MIT Happy Robot: Real-Time Affective Interaction & Social Robotics

Overview

MIT Happy Robot was an interactive demo developed at the MIT Media Lab. It explored how automatic emotion recognition can be coupled with expressive robotic behaviors to create engaging, affect-aware human–robot interactions.

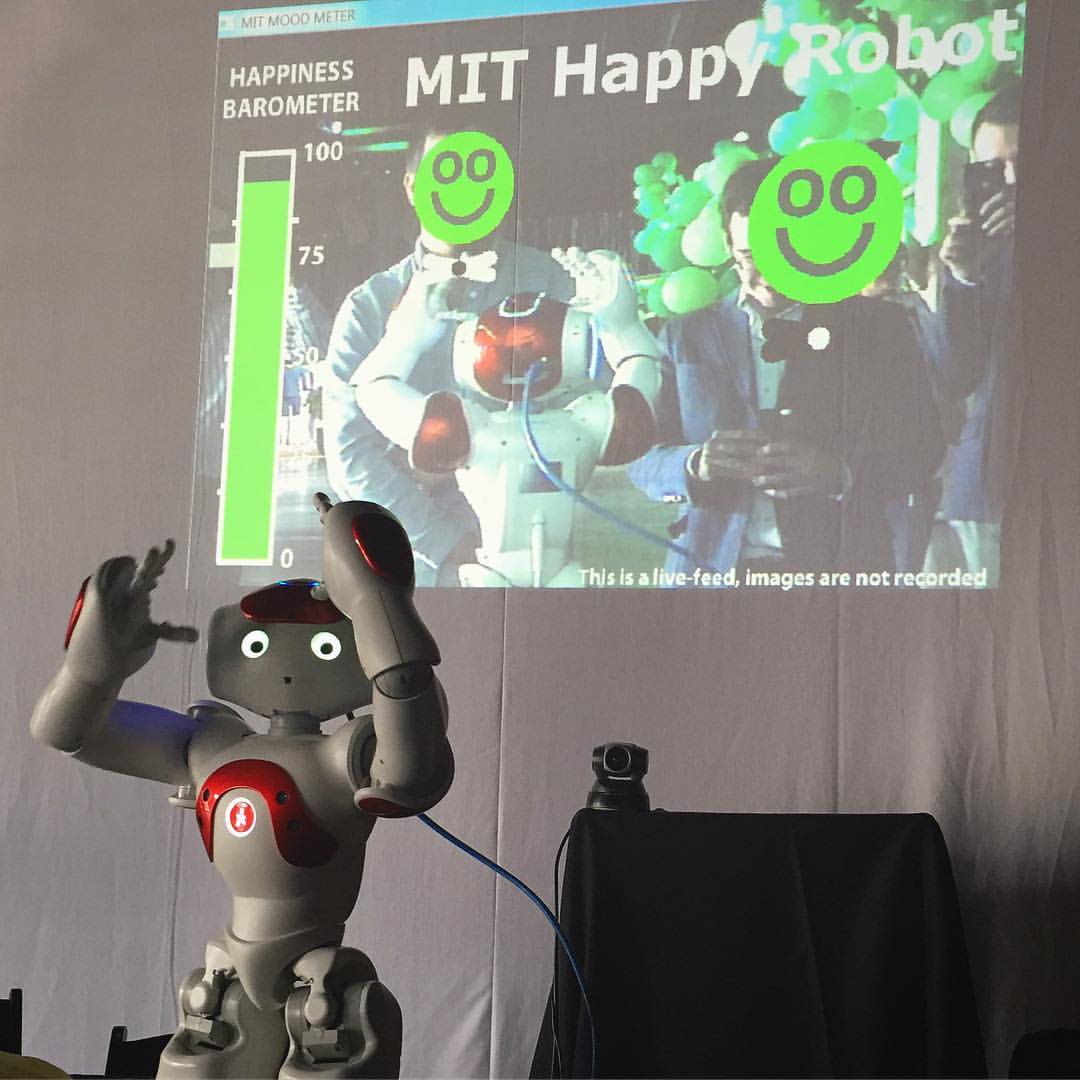

The system used a humanoid robot to perceive a person’s emotional state from their facial expressions in real time and respond with appropriate social behaviors (such as dancing, gesturing, or vocal expressions) designed to elicit engagement and positive affect. The project served as a proof-of-concept for integrating computer vision–based affect recognition, decision logic, and robotic actuation into a cohesive interactive experience.

Emotion Recognition and Affective Interaction

At the core of the system was a facial emotion recognition pipeline that analyzed visual cues from a camera feed to infer basic affective states (e.g., happiness, sadness, surprise). These inferred emotional signals were mapped to high-level interaction strategies, allowing the robot to adapt its behavior dynamically to the user’s perceived emotional state.
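As a rough illustration of this mapping step, the sketch below smooths per-frame emotion scores over time and maps the dominant emotion to an interaction strategy. It is not the project's actual code: the label set (including `neutral`), the strategy names, the smoothing factor, and the `AffectTracker` and `choose_strategy` helpers are all assumptions made for the example, and the per-frame scores are assumed to come from some upstream facial expression classifier.

```python
from dataclasses import dataclass, field

# Hypothetical label set; the original system's labels are not documented here.
EMOTIONS = ("happiness", "sadness", "surprise", "neutral")

# Hypothetical mapping from an inferred emotion to a high-level strategy.
STRATEGY_FOR = {
    "happiness": "celebrate",
    "sadness": "cheer_up",
    "surprise": "mirror",
    "neutral": "re_engage",
}


def _initial_scores():
    # Start from "neutral" so a single noisy frame cannot flip the state.
    scores = {e: 0.0 for e in EMOTIONS}
    scores["neutral"] = 1.0
    return scores


@dataclass
class AffectTracker:
    """Exponentially smooths per-frame emotion scores (assumed in [0, 1])."""
    alpha: float = 0.3  # assumed smoothing factor; higher reacts faster
    scores: dict = field(default_factory=_initial_scores)

    def update(self, frame_scores):
        """Blend one frame's scores in and return the dominant emotion."""
        for e in EMOTIONS:
            observed = frame_scores.get(e, 0.0)
            self.scores[e] = (1 - self.alpha) * self.scores[e] + self.alpha * observed
        return max(self.scores, key=self.scores.get)


def choose_strategy(emotion):
    """Map an emotion label to a strategy, defaulting to re-engagement."""
    return STRATEGY_FOR.get(emotion, "re_engage")
```

Smoothing is one simple way to trade a little latency for stability: with these assumed settings, a single frame scored as sadness in the middle of a run of smiles does not change the selected strategy.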

The emphasis was on responsiveness, robustness, and real-time interaction, demonstrating how affective signals can be used to drive socially meaningful robot behaviors in unconstrained, public-facing settings.

Robotic Behaviors and Engagement

The robot was programmed with a repertoire of expressive behaviors (including movements, gestures, and playful actions) that were triggered based on the detected emotional state. For example, the robot might respond to a smiling face with a celebratory dance or attempt to re-engage a disengaged user through exaggerated motion or sound.

This closed-loop perception–action system illustrated how even relatively simple affective models, when tightly integrated with embodiment and timing, can produce compelling and intuitive human–robot interactions.
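The role of timing in such a loop can be sketched with a small behavior selector that refuses to start a new behavior until a cooldown has elapsed, so the robot does not interrupt its own dance on every frame. This is a minimal sketch under stated assumptions, not the project's implementation: the behavior names, the `BehaviorSelector` class, and the 4-second cooldown are all hypothetical.

```python
import random

# Hypothetical behavior repertoire, keyed by interaction strategy.
BEHAVIORS = {
    "celebrate": ["dance", "raise_arms"],
    "re_engage": ["wave", "play_sound"],
}


class BehaviorSelector:
    """Picks a behavior for a strategy, at most once per cooldown window."""

    def __init__(self, cooldown_s=4.0, rng=None):
        self.cooldown_s = cooldown_s          # assumed minimum gap between behaviors
        self.rng = rng or random.Random()
        self._last_trigger = None             # time of the last triggered behavior

    def step(self, strategy, now):
        """Return a behavior name to run, or None if nothing should start.

        `now` is a timestamp in seconds, passed in by the caller so the
        loop can use any clock (for example time.monotonic()).
        """
        if self._last_trigger is not None and now - self._last_trigger < self.cooldown_s:
            return None  # a previous behavior is presumed to still be playing
        options = BEHAVIORS.get(strategy)
        if not options:
            return None  # unknown strategy: do nothing rather than guess
        self._last_trigger = now
        return self.rng.choice(options)
```

In a running loop, each camera frame would yield a strategy, `step` would be called with the current time, and any non-`None` result would be sent to the robot's motion or audio controller.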

Takeaways

MIT Happy Robot demonstrated how:

- Facial affect recognition can be integrated into real-time robotic systems
- Simple affect-to-action mappings can produce engaging social behaviors
- Embodied interaction amplifies the perceived intelligence and warmth of AI systems

The project helped inform later work on affective computing, human-centered AI, and adaptive agent behavior, highlighting the importance of closing the loop between perception, decision-making, and action in interactive AI systems.

World Happiness Summit

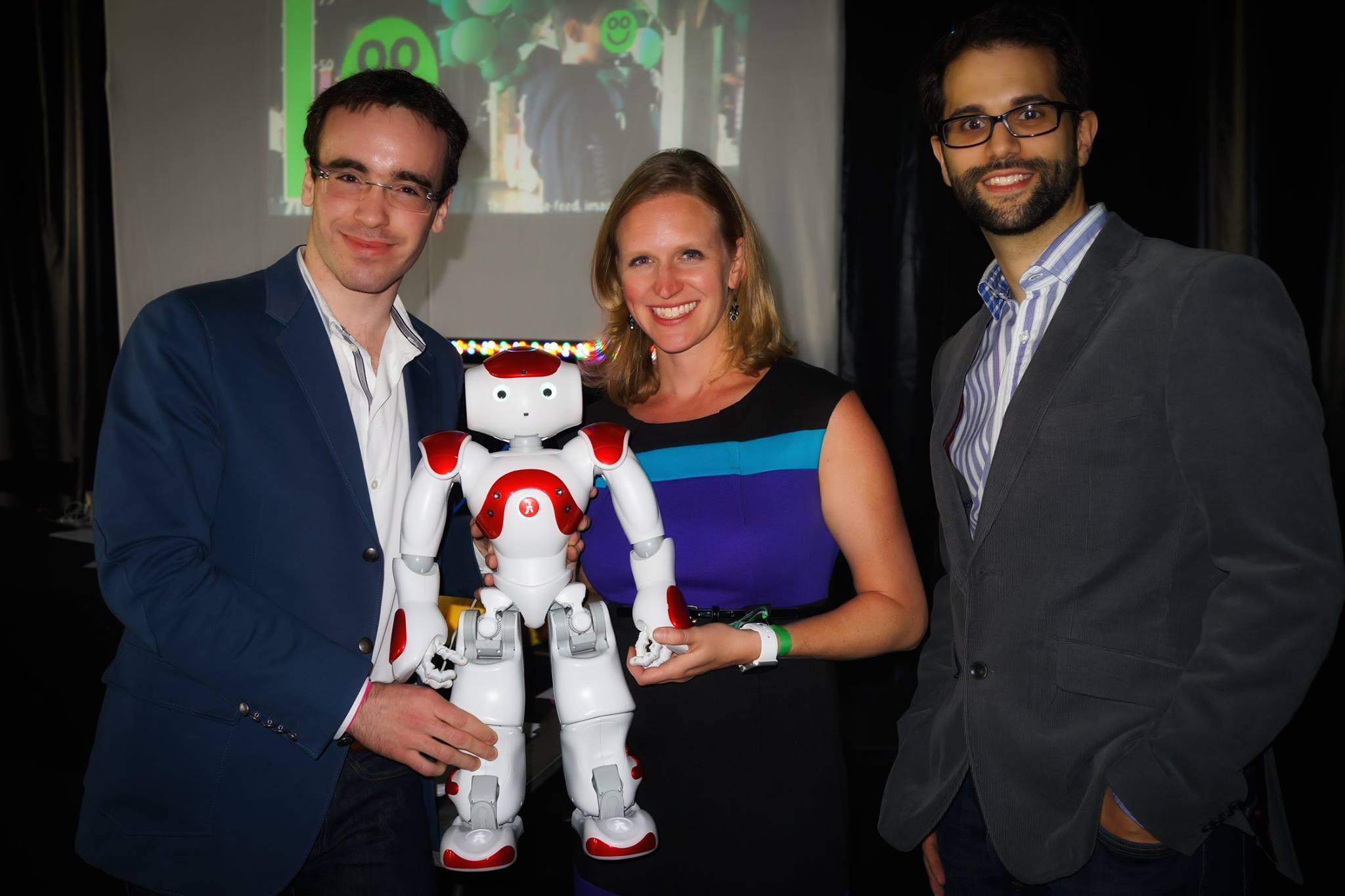

This project was developed jointly with Ognjen Rudovic and Javier Hernandez and was demonstrated live at the World Happiness Summit (Miami, March 2017).
